The ecosystem got its first look at the makeup of the CDN SuperPoP when Fastly published its hardware specs for everyone to see. For many, what was once an extremely well-guarded secret was no longer a secret, and some CDNs followed suit by revamping their infrastructure to make it more Fastly-like. This year, servers supporting 1TB of RAM and dual-port 100Gbps NICs will become a reality. Translation: individual caching servers will be able to push 200Gbps of throughput, a 5x to 10x increase over the servers currently used in CDN environments. Some might say the servers and switches already housed in CDN racks are good enough, and that the throughput bottleneck lies with the carriers who provide transit to CDNs, but that argument no longer holds.

100Gbps Internet ports are available today in many locations around the world, so the bottleneck is no longer on the carrier side. These 100Gbps Internet ports are a mind-blowing game changer for the CDN SuperPoP, giving rise to what we'll call the Next-Gen CDN SuperPoP.

The Next-Gen CDN SuperPoP is coming to town, and infrastructure folks working in the space had better start planning for the inevitable: overhauling the network. It's been said that the Linux kernel and other pieces of the software stack won't be able to support 200Gbps per box. We're sure results will differ from one deployment to the next.

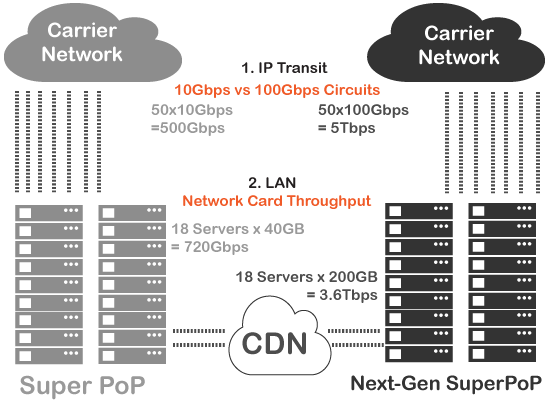

Now let’s do some simple comparison math for an imaginary CDN with 18 servers per PoP, before and after the overhaul.

- 1 server with a dual-port 100Gbps NIC = 200Gbps / server

- LAN Throughput: SuperPoP w/ 18 servers x 40Gbps = 720Gbps vs Next-Gen SuperPoP w/ 18 servers x 200Gbps = 3.6Tbps

- WAN Throughput: SuperPoP w/ 50 Internet ports x 10Gbps = 500Gbps vs Next-Gen SuperPoP w/ 50 Internet ports x 100Gbps = 5Tbps

That's a crazy throughput increase: 5x per server and 10x in Internet capacity per circuit.
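The comparison math above can be reproduced with a few lines of code, which makes it easy to plug in a different server count, NIC speed, or number of Internet ports. This is a minimal sketch that assumes the same example figures (18 servers, 40Gbps per existing server, 50 Internet ports at 10Gbps vs 100Gbps) and treats throughput as the sum of nominal link capacities, not measured traffic. The names `PopCapacity` and `pop_capacity` are hypothetical helpers introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PopCapacity:
    """Hypothetical aggregate capacity of one PoP, in Gbps (nominal, not measured)."""
    lan_gbps: int  # sum of per-server NIC capacity
    wan_gbps: int  # sum of Internet (transit) port capacity


def pop_capacity(servers: int, gbps_per_server: int,
                 ports: int, gbps_per_port: int) -> PopCapacity:
    """Multiply unit counts by per-unit link speed to get PoP totals."""
    return PopCapacity(lan_gbps=servers * gbps_per_server,
                       wan_gbps=ports * gbps_per_port)


SERVERS = 18  # example server count from the text
PORTS = 50    # example Internet port count from the text

# Today's SuperPoP: 40Gbps per server, 10Gbps Internet ports.
superpop = pop_capacity(SERVERS, 40, PORTS, 10)
# Next-Gen SuperPoP: dual-port 100Gbps NIC = 2 x 100 = 200Gbps per server,
# plus 100Gbps Internet ports.
nextgen = pop_capacity(SERVERS, 2 * 100, PORTS, 100)

lan_gain = nextgen.lan_gbps // superpop.lan_gbps
wan_gain = nextgen.wan_gbps // superpop.wan_gbps

print(f"LAN: {superpop.lan_gbps} Gbps -> {nextgen.lan_gbps / 1000} Tbps ({lan_gain}x)")
print(f"WAN: {superpop.wan_gbps} Gbps -> {nextgen.wan_gbps / 1000} Tbps ({wan_gain}x)")
```

Running it prints `LAN: 720 Gbps -> 3.6 Tbps (5x)` and `WAN: 500 Gbps -> 5.0 Tbps (10x)`, matching the figures in the list. Real-world throughput will be lower once protocol overhead, oversubscription, and the software-stack limits mentioned earlier come into play.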

SuperPoP vs Next-Gen SuperPoP