In the world of CDN 3.0 architecture, caching engineers, networking engineers, and full stack engineers will work alongside mathematicians and data scientists. The feature set will expand into countless variations, reflecting the types of algorithms used, and thousands of algorithms already exist today. Manual tasks such as cluster performance tuning and network tuning will take a back seat to machine learning (ML) tuning.

Machine learning changes the tuning game from manual tuning to self-tuning, with the system learning as it goes. Even personalization will take on new meaning. For example, a new personalization feature leveraging ML algorithms will capture visitor site activity and feed that activity as training data to a classifier, which learns patterns from it. Thereafter, every time a visitor arrives at the site, the ML algorithm predicts which page is most likely to result in a sale and delivers it.
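The personalization loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any CDN implements the feature: the `PageRecommender` class, the visitor segments, and the page names are all hypothetical, and a simple count-based estimate of conversion rate stands in for a real classifier.

```python
class PageRecommender:
    """Toy count-based model (hypothetical): estimates the conversion
    rate of each page for each visitor segment from past activity."""

    def __init__(self, pages):
        self.pages = list(pages)
        self.views = {}  # (segment, page) -> number of visits
        self.sales = {}  # (segment, page) -> number of visits that ended in a sale

    def train(self, records):
        # Each record is (segment, page, purchased), i.e. captured site activity.
        for segment, page, purchased in records:
            key = (segment, page)
            self.views[key] = self.views.get(key, 0) + 1
            if purchased:
                self.sales[key] = self.sales.get(key, 0) + 1

    def conversion_rate(self, segment, page):
        # Laplace smoothing so unseen combinations get a neutral 0.5 estimate.
        key = (segment, page)
        return (self.sales.get(key, 0) + 1) / (self.views.get(key, 0) + 2)

    def predict(self, segment):
        # Deliver the page with the highest estimated conversion rate.
        return max(self.pages, key=lambda p: self.conversion_rate(segment, p))


# Made-up training data for illustration only.
records = [
    ("mobile", "landing_a", True),
    ("mobile", "landing_a", True),
    ("mobile", "landing_b", False),
    ("desktop", "landing_a", False),
    ("desktop", "landing_b", True),
    ("desktop", "landing_b", True),
    ("desktop", "landing_b", False),
]

model = PageRecommender(["landing_a", "landing_b"])
model.train(records)
print(model.predict("mobile"))   # landing_a
print(model.predict("desktop"))  # landing_b
```

A production system would likely use richer features (referrer, session depth, time of day) and a trained classifier, but the shape stays the same: capture activity, train, then predict on each visit.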

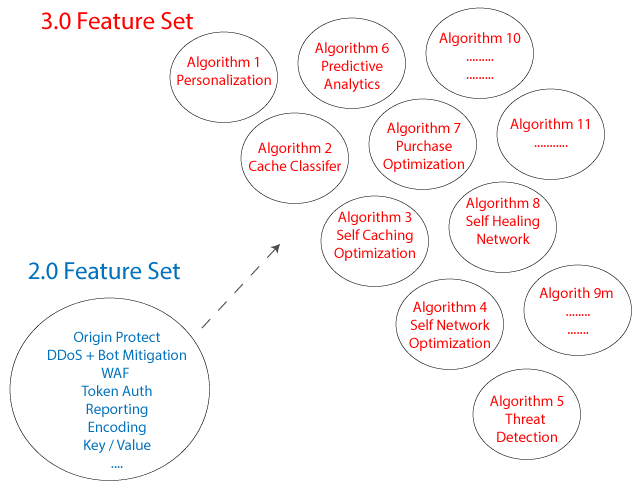

2.0 Feature Set vs 3.0 Feature Set

And what about sales organizations? They will have to be retrained in a whole new technical vocabulary, with acronyms drawn from AI, machine learning, big data, DevOps, data science, statistics, and applied math. Today, the term machine learning is largely a buzzword used by marketing departments. In due time, the machine learning conversation in the CDN industry will change as more people learn the finer details, such as which algorithms do what, the purpose of each class of algorithms, and the differences between the various types of neural networks.

For example, some edge security startups today tout their threat platforms as machine learning driven, built on supervised learning algorithms. The competitive response to that claim would be that unsupervised learning is actually a better model than supervised learning when it comes to detecting threats. Then point the potential customer to the dozens of academic studies on the subject.
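To make the distinction concrete: a supervised model needs traffic that has already been labeled as malicious or benign, while an unsupervised one looks for outliers without any labels. The sketch below shows the unsupervised idea using a modified z-score based on the median absolute deviation. It is an illustration only; the `flag_anomalies` function, the 3.5 threshold, and the traffic figures are assumptions, not a description of any vendor's platform.

```python
from statistics import median


def flag_anomalies(requests_per_minute, threshold=3.5):
    """Flag clients whose request rate is an outlier, using no labels.

    Uses the modified z-score: 0.6745 * |x - median| / MAD.
    The 3.5 cutoff is a common rule of thumb, assumed here.
    """
    rates = list(requests_per_minute.values())
    med = median(rates)
    mad = median(abs(r - med) for r in rates)
    if mad == 0:
        return []  # all clients behave identically; nothing stands out
    return sorted(
        client
        for client, rate in requests_per_minute.items()
        if 0.6745 * abs(rate - med) / mad > threshold
    )


# Made-up per-client request rates (documentation-range IP addresses).
traffic = {
    "192.0.2.1": 12,
    "192.0.2.2": 15,
    "192.0.2.3": 11,
    "192.0.2.4": 14,
    "192.0.2.5": 13,
    "192.0.2.6": 950,
}

print(flag_anomalies(traffic))  # ['192.0.2.6']
```

No client was ever labeled as an attacker here; the outlier is found purely from how far it sits from typical behavior, which is the property the competitive argument leans on.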