Facebook’s computing demands are increasing, and to meet them the company has introduced a new solution: Fabric Aggregator.

Developments in immersive entertainment, such as live video, virtual reality and 360-degree video, all require additional capacity. This, along with Facebook’s continued expansion into AI, machine learning and other areas, is pushing even the highest-capacity networking switches currently on the market to their limits. Facebook’s AI and machine learning applications require huge amounts of data to be shuffled between servers within a region, even more than travels between Facebook’s global network and the devices of its 2.1 billion users worldwide.

To meet demand, Facebook is building new data centers in more parts of the world, and it is also building more data centers per location. The social networking company recently announced that it would soon begin expanding its Papillion, Nebraska, data center campus from two buildings to six.

At the Open Compute Project (OCP) Summit last week in San Jose, California, Sree Sankar, technical product manager at Facebook, discussed the scaling challenges ahead and introduced the company’s latest technical solution, the “fabric aggregation layer”.

In late 2016, Facebook realized it needed triple the number of ports it then had, but simply scaling network capacity linearly was not feasible given the power available in its data centers. The company needed a more energy-efficient solution.

“We were already using the largest switch out there,” Sankar said. “So we had to innovate.”

Its engineers built a distributed, disaggregated network system called Fabric Aggregator, which addresses many of the challenges around energy efficiency and scalability and also improves network resiliency. The approach allows aggregation-layer capacity to be scaled in large chunks.

Fabric Aggregator took the Facebook team about five months to design, and the company has been rolling it out across its data centers over the past nine months.

In a blog post introducing Fabric Aggregator, Sankar’s team described the benefits of a disaggregated approach, which “allows us to accommodate larger regions and varied traffic patterns, while providing the flexibility to adapt to future growth”.

Fabric Aggregator can handle both traffic that flows between buildings in a region (“east/west traffic”) and traffic that leaves and enters a region (“north/south traffic”). Both types of traffic continue to grow, at different rates, as Facebook’s regions scale up and more immersive experiences are offered. A large, general-purpose network chassis no longer met the platform’s needs.

source: Facebook

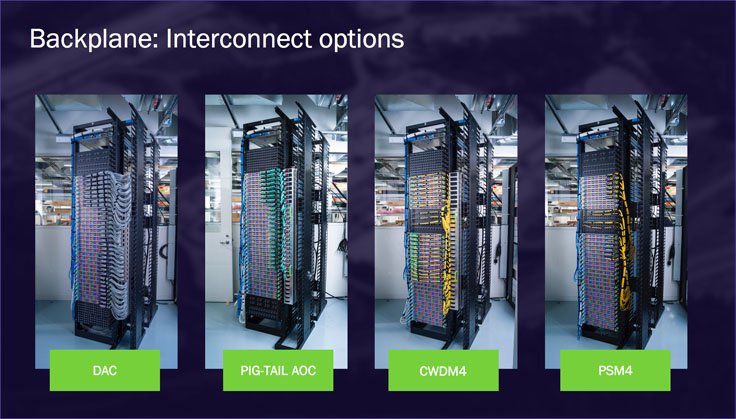

Fabric Aggregator interconnects many of the Wedge 100S switches the company already had in place, and Facebook developed a cabling assembly unit to emulate the backplane of a switch chassis. This approach allows Facebook to scale capacity, interchange building blocks and modify the cable assembly as its requirements change. Furthermore, because Fabric Aggregator is made up of many identical Wedge 100S building blocks, any one of the switches can go out of service, whether deliberately or accidentally, without impacting the overall performance of the network.
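One way to read the resiliency claim: with a pool of identical, interchangeable units, losing one removes only a small fraction of the pool’s capacity instead of taking down a monolithic chassis, so spare headroom can absorb it. The minimal Python sketch below models that idea under stated assumptions; `SubswitchPool`, its methods and the 3.2 Tbps per-unit figure are hypothetical and illustrative, not Facebook’s code or published numbers.

```python
from dataclasses import dataclass, field


@dataclass
class SubswitchPool:
    """Hypothetical pool of identical, interchangeable subswitches."""

    unit_capacity_tbps: float  # illustrative per-unit capacity, not a published figure
    in_service: set = field(default_factory=set)

    def add(self, name: str) -> None:
        """Bring a unit into service."""
        self.in_service.add(name)

    def drain(self, name: str) -> None:
        """Take a unit out of service, deliberately or after a failure."""
        self.in_service.discard(name)

    def capacity_tbps(self) -> float:
        # Every unit is identical, so total capacity is linear in the unit count.
        return len(self.in_service) * self.unit_capacity_tbps


pool = SubswitchPool(unit_capacity_tbps=3.2)
for i in range(16):
    pool.add(f"sub{i}")

full = pool.capacity_tbps()
pool.drain("sub7")  # one unit fails or is pulled for maintenance
degraded = pool.capacity_tbps()
print(f"{full:.1f} -> {degraded:.1f} Tbps ({degraded / full:.1%} of capacity remains)")
```

Because no unit is special, any of them could be the one drained; in this toy model, losing one of 16 units leaves 15/16 of the capacity in place.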

Each Fabric Aggregator node implements a two-layer cross-connect architecture, with each layer serving a different requirement:

- The downstream layer switches regional (east/west) traffic.

- The upstream layer switches traffic to and from other regions (north/south traffic). The main purpose of this layer is to compress the interconnects with Facebook’s backbone network.

Each layer can hold a quasi-arbitrary number of subswitches: downstream subswitches in the downstream layer and upstream subswitches in the upstream layer. Because the solution is split into two separate layers, the company can scale east/west and north/south capacity independently, simply by adding subswitches to the relevant layer as traffic demands.
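The independent scaling of the two layers can be sketched as a simple sizing model. This is a hypothetical illustration, not Facebook’s tooling: `FabricAggregatorNode`, `scale_to`, `ceil_div` and the 3,200 Gbps per-subswitch capacity are assumptions, and the model ignores the ports consumed by the cable assembly’s internal cross-connects.

```python
from dataclasses import dataclass


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding."""
    return -(-a // b)


@dataclass
class FabricAggregatorNode:
    """Hypothetical sizing model for one node's two layers.

    downstream: subswitches carrying regional (east/west) traffic
    upstream:   subswitches carrying inter-region (north/south) traffic
    """

    downstream: int
    upstream: int
    unit_capacity_gbps: int = 3200  # illustrative, not a published figure

    def east_west_gbps(self) -> int:
        return self.downstream * self.unit_capacity_gbps

    def north_south_gbps(self) -> int:
        return self.upstream * self.unit_capacity_gbps

    def scale_to(self, ew_demand_gbps: int, ns_demand_gbps: int) -> "FabricAggregatorNode":
        """Return a node sized for the given demands; each layer grows on its
        own and never shrinks below its current size."""
        return FabricAggregatorNode(
            downstream=max(self.downstream, ceil_div(ew_demand_gbps, self.unit_capacity_gbps)),
            upstream=max(self.upstream, ceil_div(ns_demand_gbps, self.unit_capacity_gbps)),
            unit_capacity_gbps=self.unit_capacity_gbps,
        )


node = FabricAggregatorNode(downstream=8, upstream=4)
# East/west demand grows sharply; north/south demand still fits in four upstream units.
bigger = node.scale_to(ew_demand_gbps=40_000, ns_demand_gbps=12_000)
print(bigger)  # FabricAggregatorNode(downstream=13, upstream=4, unit_capacity_gbps=3200)
```

Because each layer is sized from its own demand, a jump in east/west traffic grows only the downstream layer, which is the property the two-layer split is meant to provide.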

Fabric Aggregator and the Wedge 100S run on Facebook Open Switching System (FBOSS) software. These common building blocks allow the solution to run BGP between all subswitches. The design is distributed, with no central controller: each subswitch operates independently, and all subswitches are interchangeable at both the hardware and software level.
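The article says only that BGP runs between all subswitches and that there is no central controller. A common way to spread traffic across BGP peers is equal-cost multipath (ECMP) hashing; the sketch below assumes that technique and shows how each subswitch could pick a next hop using only its own view of which BGP sessions are up. `pick_next_hop`, the peer names, the flow tuples and the use of SHA-256 (standing in for a switch ASIC’s hash function) are all hypothetical.

```python
import hashlib
from collections import Counter


def pick_next_hop(flow: tuple, live_peers: list) -> str:
    """ECMP-style next-hop choice using only this subswitch's local view.

    `flow` is a (src_ip, dst_ip, proto, src_port, dst_port) tuple and
    `live_peers` lists the peers whose BGP sessions are currently up.
    SHA-256 stands in for a switch ASIC's hash function (an assumption).
    """
    if not live_peers:
        raise RuntimeError("no live BGP peers")
    peers = sorted(live_peers)  # stable order, independent of input order
    key = "|".join(map(str, flow)).encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return peers[digest % len(peers)]


peers = [f"up{i}" for i in range(4)]
flows = [("10.0.0.1", "10.1.0.9", 6, 40000 + i, 443) for i in range(1000)]

before = {f: pick_next_hop(f, peers) for f in flows}
# up2's BGP session drops; this subswitch stops using it without consulting anyone.
survivors = [p for p in peers if p != "up2"]
after = {f: pick_next_hop(f, survivors) for f in flows}
print(Counter(after.values()))
```

Plain modulo hashing remaps many flows whenever the peer count changes; production designs often use resilient or consistent hashing to limit that churn, which this sketch does not attempt.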

“The ability to tailor different Fabric Aggregator node sizes in different regions allows us to use resources more efficiently, while having no internal dependencies keeps failures isolated, improving the overall reliability of the system”, the Facebook team wrote.

Sankar and her colleagues already see Fabric Aggregator’s capacity limits on the horizon. “It’s unsustainable for a 400-Gig data center,” Sankar said, and she encouraged networking vendors to accelerate development in this area. A recent Dell’Oro Group forecast said the transition from 100G to 400G will start in 2019 and accelerate in 2020, with 400G surpassing 100G in total deployed bandwidth in 2022.