Habana Labs, an innovative startup, has developed an AI processor designed specifically to run deep neural networks (DNNs). The software-programmable processor is available as a PCIe card and as a mezzanine card. It handles both training models and inference (running trained models). Simply plug the PCIe card into a commodity data center server and it turns into an AI workstation that outperforms a GPU-based system.

Although GPUs do an excellent job of running DNN models, they were designed as general-purpose cards that run many different types of games as well as AI models. As such, they are not perfectly suited to AI workloads. Habana created a “Pure AI” card to accelerate both the training and inference phases. Training requires more computational horsepower because data moves forward through the layers of the DNN model and then backward (backpropagation), many times over, until model accuracy reaches the desired objective. Habana is an alternative to the Google Cloud TPU, and it is ideal for enterprises that are DIY (do-it-yourself) AI shops.
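To make the forward-then-backward cycle concrete, here is a minimal sketch in plain Python. It is a toy, not Habana's software stack: the one-weight model, the data, the learning rate, and the stopping threshold are all hypothetical values chosen for illustration.

```python
# Toy training loop: forward pass, backward pass, repeat until the loss
# reaches a target. Model, data, and hyperparameters are hypothetical.

def train(xs, ys, lr=0.05, target_loss=1e-6, max_epochs=10_000):
    w = 0.0  # single trainable weight of the model y = w * x
    loss = float("inf")
    epochs = 0
    for epochs in range(1, max_epochs + 1):
        # Forward pass: compute predictions and the mean squared error.
        preds = [w * x for x in xs]
        loss = sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs)
        if loss <= target_loss:
            break  # the desired objective has been reached
        # Backward pass: gradient of the loss with respect to w.
        grad = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / len(xs)
        # Update step: move the weight against the gradient.
        w -= lr * grad
    return w, loss, epochs

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated by y = 2x
w, loss, epochs = train(xs, ys)
print(f"w={w:.4f} loss={loss:.2e} epochs={epochs}")
```

Every epoch repeats the forward and backward computation over the data. In a real DNN that work is multiplied across millions of weights, which is why training is the more demanding phase.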

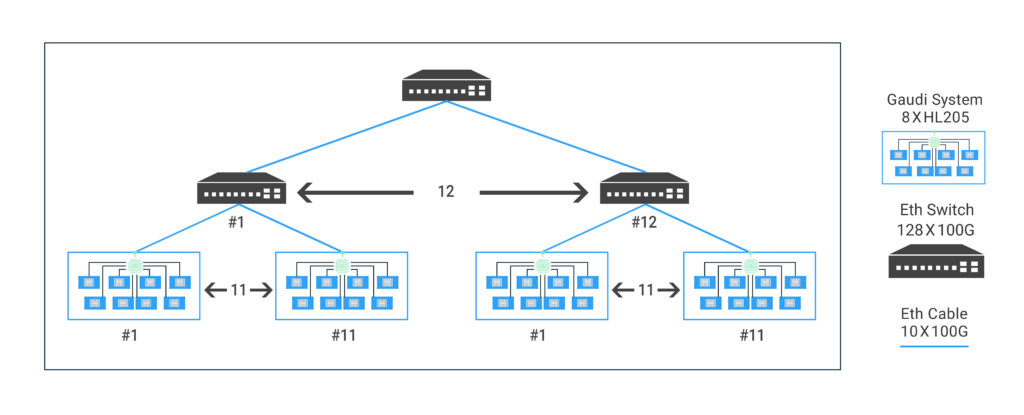

Scale-Out

When it comes to running AI models at scale, using more than 16 GPUs creates a bottleneck. Habana AI processors, however, can be daisy-chained together to support 1,056 cards across a cluster of servers. Best of all, the cards support Ethernet.

Source: Habana

Besides the scale-out capabilities, the cards outperform GPUs by a large margin. In one benchmark, Nvidia published a stat showing 8 Tesla V100 GPUs doing the work of 169 CPU servers by processing 45,000 images/second (inference). Three Habana AI cards, however, do the work of those 8 Tesla V100 GPUs. So not only is Habana more cost-effective, it also uses less power to run AI models.
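The per-unit throughput implied by these figures can be worked out with a short calculation. This sketch assumes that the three Habana cards match the same 45,000 images/second total as the 8 GPUs, which is how the comparison above reads. The variable names are illustrative.

```python
# Per-unit throughput implied by the figures quoted in the article.
# Assumption: all three configurations deliver the same total throughput.
IMAGES_PER_SECOND = 45_000
V100_GPUS = 8
HABANA_CARDS = 3
CPU_SERVERS = 169

def per_unit(total, units):
    """Average images/second handled by each unit."""
    return total / units

v100_rate = per_unit(IMAGES_PER_SECOND, V100_GPUS)
habana_rate = per_unit(IMAGES_PER_SECOND, HABANA_CARDS)
cpu_rate = per_unit(IMAGES_PER_SECOND, CPU_SERVERS)

print(f"Per V100 GPU:    {v100_rate:,.0f} images/s")
print(f"Per Habana card: {habana_rate:,.0f} images/s")
print(f"Per CPU server:  {cpu_rate:,.0f} images/s")
print(f"Habana card vs V100: {habana_rate / v100_rate:.2f}x")
```

Under that assumption, each Habana card handles roughly 2.7 times the images per second of a single V100.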

AI Processor Specs

Habana offers two AI processor cards: 1) the HL-205 and 2) the HL-20X. Both come with an HL-200 processor that contains 8 TPCs (Tensor Processing Cores). The specs are summarized below:

- Two Cards

- HL-205 mezzanine card: 300 W

- HL-20X PCIe card: 200 W

- Each card contains a single HL-200 processor with 8 TPCs (Tensor Processing Cores)

- Works on commodity data center servers

- Ideal for recommendation systems and image recognition models

- AI Processors are fully programmable

- 1 TB/s memory bandwidth

- Supports training and inference

- Comes with 10 x 100 Gbps ports

- On-Chip RDMA (Remote Direct Memory Access)

- Supported AI frameworks: PyTorch, Caffe2, TensorFlow, MXNet, MCT, and more coming
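A few back-of-the-envelope figures follow from the spec list above. This is a rough sketch that assumes decimal units (1 TB = 1,000 GB, 8 bits per byte) and ignores protocol overhead. The three-card count in the power example is a hypothetical configuration.

```python
# Rough figures derived from the spec list. Assumes decimal units and
# ignores protocol overhead; the card count below is hypothetical.
PORTS_PER_CARD = 10
PORT_SPEED_GBPS = 100            # gigabits per second, per port
MEMORY_BANDWIDTH_TBYTES = 1.0    # terabytes per second
CARD_POWER_WATTS = {"HL-205": 300, "HL-20X": 200}

def network_bandwidth_gbytes(ports, gbps_per_port):
    """Aggregate port bandwidth in gigabytes per second."""
    return ports * gbps_per_port / 8

def total_power_watts(card, count):
    """Combined rated power of `count` cards of the given model."""
    return CARD_POWER_WATTS[card] * count

net_gbytes = network_bandwidth_gbytes(PORTS_PER_CARD, PORT_SPEED_GBPS)
mem_gbytes = MEMORY_BANDWIDTH_TBYTES * 1000
ratio = mem_gbytes / net_gbytes

print(f"Network, per card: {net_gbytes:.0f} GB/s")
print(f"Memory, per card:  {mem_gbytes:.0f} GB/s")
print(f"Memory-to-network ratio: {ratio:.0f}x")
print(f"Three HL-20X cards: {total_power_watts('HL-20X', 3)} W")
```

By these numbers, the ten ports together carry about one eighth of the on-card memory bandwidth.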

Background

- Company: Habana Labs

- Founded: 2016

- HQ: Tel Aviv

- # of Employees: 23

- Raised: $75M

- Founders: David Dahan (CEO) and Ran Halutz (VP of R&D)

- Product: AI Processors for deep neural networks